How to Choose the Right AI Prompts to Monitor for Your Hotel

At some point in the past year, someone in your organisation raised a concern about AI visibility. It may have originated from a tool demo, an email, a LinkedIn post, or an article forwarded with a short note attached. The conclusion was likely the same: guests are searching differently, AI is shaping what they see, and you are not doing enough about it.

The response that follows is usually predictable: monitor more prompts, create more content, and try to be visible in more conversations. The assumption underneath all of this is rarely questioned: if an AI tool shows a gap, the gap must be real.

Why This Narrative Took Hold

AI visibility tools need to demonstrate value quickly. To do that, they scan your website, generate a set of “relevant” prompts, and then show where your hotel is not being surfaced across AI platforms. The output is usually a long list of missed opportunities, or what appear to be missed opportunities. Structurally, that makes sense. The system is doing what it is designed to do.

The issue is that most of these tools are not built for hospitality. They operate across industries where geography is often secondary, and where appearing in broad, generic queries can still carry commercial value. Hotel demand does not work that way.

A boutique hotel is not competing globally for “luxury hotel” visibility. It is competing within a very specific demand pool, shaped by location, seasonality, pricing level, and guest intent.

When that layer is removed, the prompts generated by these tools start to drift away from how real travellers search, and more importantly, how real bookings happen. This is where most perceived “AI visibility gaps” begin.

The Problem Is Not Visibility. It Is Prompt Selection

Most AI monitoring setups start from the prompts suggested by the tool itself, usually derived from your website content and expanded through generic keyword logic. On the surface, this feels logical. In practice, it creates a distorted view of where your hotel should realistically appear.

The core problem is that these prompts are not grounded in how hotel demand actually works. In hospitality, geography is not a modifier. It is the foundation of the search. A user looking for a boutique hotel, a honeymoon resort, or a beachfront property is almost always doing so within a defined location, even if that location is loosely expressed. The decision is constrained by destination first, and only then refined by hotel type, price, or experience.

When prompts are generated without that structure, they become commercially irrelevant. Hotels end up being evaluated against generic queries they were never realistically competing for, and visibility is measured in conversations that do not lead to bookings.

What appears as underperformance is usually a mismatch between the prompts being tracked and the way demand is actually formed.

A Visibility Gap That Does Not Exist

One of the more common patterns we see in AI monitoring tools is the surfacing of highly specific but structurally irrelevant prompts.

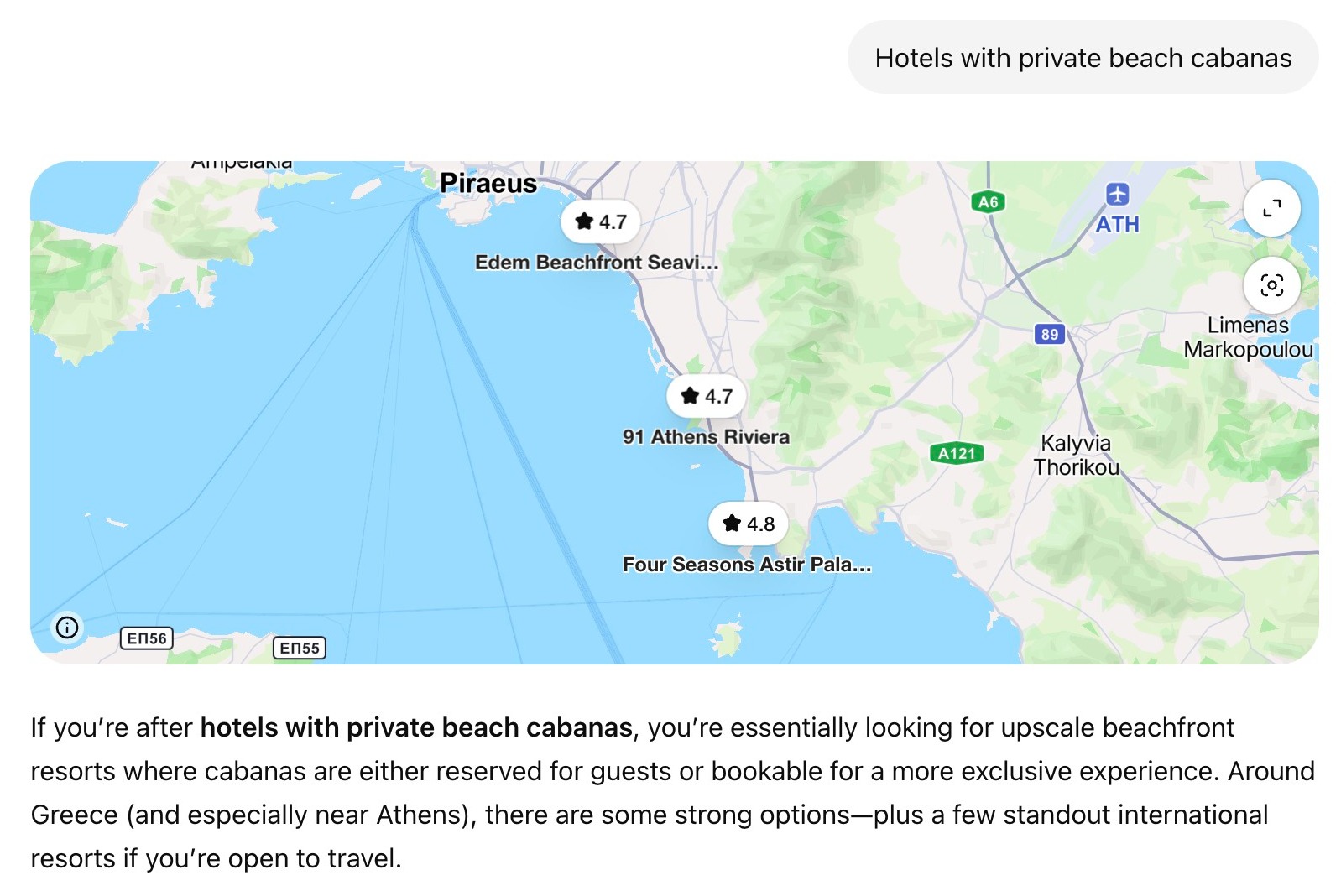

For example, a hotel may be flagged as having no visibility for a query like “hotels with private beach cabanas” or “design-led beachfront hotels,” with no geographic qualifier attached. On paper, the match looks logical. The hotel may offer exactly that experience.

In reality, the prompt sits outside any meaningful demand context.

A traveller searching for a beachfront hotel is not doing so globally. Even when location is not explicitly stated, it is implicitly defined. The decision is anchored to a destination, a region, or at the very least a shortlist of possible locations. Without that anchor, the query does not reflect how a booking decision is formed.

This is where the gap becomes misleading. The tool identifies absence in a conversation that is not commercially viable. The hotel is not being surfaced because it does not belong in that context, not because it is underperforming.

Acting on this kind of signal typically leads in one direction: more content. New pages, new articles, expanded copy, all designed to “cover” these prompts. From a resource perspective, this is where the cost begins to accumulate.

If the prompt itself has no realistic pathway to discovery, then neither will the content built around it. It may exist, and it may even be well written, but it will not be surfaced and it will not contribute to bookings.

Why Monitoring Still Matters (When It Is Structured Properly)

The conclusion here is not that AI monitoring has no value. It does. The behaviour of these systems is still evolving, outputs are unstable, and what appears today may not appear tomorrow. Sources shift, rankings move, and different models surface different interpretations of the same hotel.

There is value in observing that movement, particularly if you are investing time or budget into content, positioning, or distribution. Without monitoring, you are operating without feedback.

The problem is that most monitoring setups introduce noise before they introduce insight, and the source of that noise is almost always the prompt set itself. When the prompt set is too broad, too generic, or disconnected from how demand actually forms, the output becomes difficult to interpret. A hotel may appear inconsistent, underrepresented, or volatile, when in reality it is being measured across a set of queries that have no shared commercial logic.

Monitoring only becomes useful when the prompts being tracked reflect real decision-making contexts. When that alignment exists, changes in visibility start to mean something. You can identify whether positioning is improving, whether certain attributes are being picked up, and whether your hotel is entering or dropping out of relevant comparisons. Without that alignment, monitoring becomes a reporting exercise rather than a decision-making tool.

A Practical Framework for Choosing AI Prompts for Hotels

If the value of monitoring depends on the quality of the prompts, then prompt selection becomes the core task.

In practice, this does not require scale. It requires structure. Most hotels do not need dozens of prompts. They need a small set that reflects how guests actually discover, evaluate, and compare properties.

1. Branded Prompts: What AI Thinks It Knows About Your Hotel

When you ask an AI system about your hotel directly, you are testing what it knows, where it is sourcing that information from, and how confidently it presents it. This includes basic facts, but more importantly, tone, positioning, and sentiment.

This is where inconsistencies start to become visible in a more meaningful way. Descriptions may lean too heavily on third-party sources, positioning can feel flattened or generic, and the elements that actually differentiate the hotel are often underrepresented or missing altogether.

If the system does not understand what your hotel’s core features are or what its guest profile is, no amount of broader visibility work will correct that. If the foundation is wrong, everything built on top of it is built on someone else’s version of your hotel.

Before monitoring how AI describes your hotel, it is worth checking what it has to work with. Our free Hotel Entity Explorer audits the structured data and source alignment shaping how your hotel is recognised across Google, your website, and the systems feeding AI discovery.

Example prompts: “Tell me about [hotel name]” / “What are the reviews like for [hotel name]?”

2. Comparative Prompts: Where Your Hotel Sits in the Decision Set

Comparative prompts introduce a decision context. Instead of asking about your hotel directly, you frame the query as a guest would when weighing options, considering your property alongside alternatives in the same destination or category.

For most hotels, this layer is more revealing than branded prompts alone. Guests rarely choose a hotel in isolation. They compare, they shortlist, and they trade off.

The key question is not whether your hotel appears in these comparative moments, but how it is framed: which attributes are highlighted, what trade-offs are implied, and whether it is presented as a strong option within that set or a secondary alternative.

This is where positioning becomes visible. If the system consistently associates your hotel with weaker attributes, mismatched price tiers, or less relevant competitors, the issue is not visibility in general. It is how your hotel is being understood within the competitive set.

Example prompts: “We are considering [hotel name], what are some similar options in the area?” / “Compare [hotel name] with other boutique hotels in [location]”

3. Service + Location Prompts: Where Real Demand Exists

Service-based prompts on their own describe a type of hotel, but not a decision. A query such as “adults-only boutique hotel” or “design-led beachfront resort” may match your property, but it does not reflect how a guest actually searches.

In hospitality, intent is almost always anchored to a place. Even when a location is not explicitly stated, it is implied. The guest already has a destination in mind, or at least a shortlist of destinations, and is refining within that context.

Once location is introduced, these prompts become commercially meaningful. “Adults-only boutique hotel in [location]” or “beachfront resort in [destination]” reflects a real demand pool, with a defined set of alternatives shaped by seasonality, pricing, and local competition.
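As a rough illustration, the geographic-anchor rule can be applied as a simple filter over a candidate prompt list. The sketch below is a minimal Python example under stated assumptions: the function name `has_geo_anchor` and the place names are hypothetical, and plain substring matching is a crude stand-in for a proper list of destinations and regions.

```python
def has_geo_anchor(prompt: str, places: list[str]) -> bool:
    """Return True if the prompt mentions at least one known place name."""
    text = prompt.lower()
    return any(place.lower() in text for place in places)


# Hypothetical destination names, for illustration only.
PLACES = ["Mykonos", "Santorini", "Cyclades"]

candidates = [
    "adults-only boutique hotel",
    "adults-only boutique hotel in Mykonos",
    "design-led beachfront resort",
    "beachfront resort in the Cyclades",
]

# Prompts with a place name are kept; the rest are set aside for review.
anchored = [p for p in candidates if has_geo_anchor(p, PLACES)]
unanchored = [p for p in candidates if not has_geo_anchor(p, PLACES)]
```

A filter like this only catches explicit place names. Prompts where the location is implied still need a human read before they are kept or dropped.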

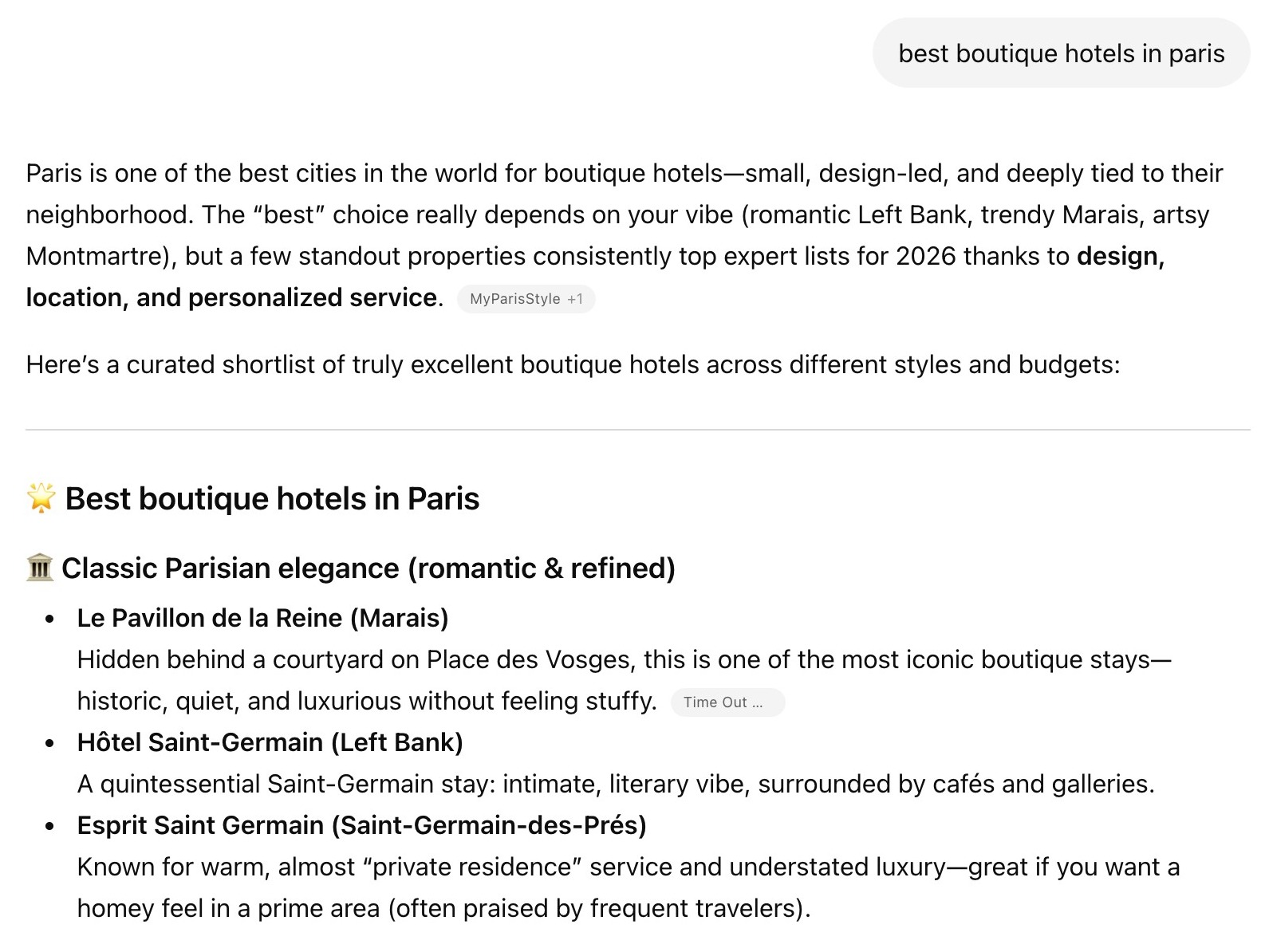

Within this category sits an even more important subset. Queries such as “best boutique hotels in [location]” or “top resorts in [destination]” represent a different stage of intent. At this point the user is no longer defining what they want. They are actively forming a shortlist.

This is where AI systems move from description to recommendation. They consolidate options, apply implicit ranking logic, and present a set of hotels that are positioned as viable choices.

From a monitoring perspective, this is one of the clearest signals available. It shows not only whether your hotel is included, but how it is framed relative to others: which competitors appear consistently alongside you, what attributes are emphasised, and whether you are presented as a leading option or a secondary alternative.

Together, these prompts form the core of any monitoring setup. They sit closest to real user behaviour and provide the strongest link between visibility and potential booking impact.

Example prompts: “Best boutique hotels in [location]” / “Where should I stay in [location] for a honeymoon?” / “Top adults-only resorts in [destination]”

4. Pricing & Offer Prompts: What the Market Is Being Told

When a user asks about pricing, value, or offers, AI systems are no longer describing your hotel. They are shaping expectations before the user even reaches your website. At this stage, the source of the information matters as much as the content itself.

AI responses in this category are often compiled from a mix of inputs. Your own website, your booking engine, OTAs, and third-party listings can all influence what is presented. The system does not distinguish between “official” and “secondary” sources in the way a hotel would prefer.

Monitoring these prompts allows you to see how that mix resolves in practice: whether rates are being presented accurately, and whether current offers are being picked up at all.

This is where inconsistencies can have a direct commercial impact. If pricing is misrepresented, or if outdated offers are surfaced, the expectation set before the click is already misaligned. By the time the user reaches your site, you are correcting a narrative rather than reinforcing it.

Unlike broader visibility prompts, this category is less about expansion and more about control. It helps ensure that when your hotel is being evaluated on price and value, it is being represented in a way that reflects your actual commercial strategy.

Example prompts: “When are the best deals for [hotel name]?” / “Does [hotel name] have any offers at the moment?”

5. Strategic Prompts: Where You Can Enter Adjacent Demand

Not all valuable visibility sits within your immediate location or core demand. Some of it exists in adjacent demand pools, where a user is anchored in one option but open to alternatives. They are not abandoning the type of experience they want. They are reconsidering where to find it.

If your hotel offers a comparable experience at a similar quality or price level but in a different location, there is a realistic pathway to appear in that conversation. Not as a direct competitor, but as a viable alternative. This is where prompt selection becomes more strategic. Instead of asking “where should we rank?”, the question becomes “which conversations could we reasonably enter?”

Unlike the other categories, these prompts cannot be generated by a tool. They require someone who understands the hotel, the market, and how demand flows in and around the destination. The prompt has to reflect a real scenario that real guests actually find themselves in.

A hotel in a less prominent destination may sit within a legitimate alternative demand pool for guests anchored to a nearby, better-known location that has become too crowded or too expensive. The prompt that captures that does not sound like a search query. It sounds like a guest: “We love going to [popular destination] but it gets so busy in the summer, where else could we go that is similar but quieter?”

Content built around these prompts can be directly justified. If you are monitoring the right scenarios, you will see when that positioning starts to take hold.

Example prompts: “We love [popular destination] but it gets too busy, where else could we go that is similar but quieter?” / “Hotels like [well-known property] but somewhere less crowded and less expensive?”

Not All Prompts Deserve to Be Monitored

Once you start structuring your prompts into clear categories, a second layer of discipline becomes necessary. Not every plausible prompt is worth tracking, even if it appears relevant at first glance.

Monitoring is not free. Most AI visibility tools charge based on usage, volume of prompts, or depth of analysis. Expanding your prompt set without control increases cost directly, often without improving the quality of insight.

More importantly, monitoring is rarely the end point. It is the starting point for action. If a prompt shows weak or no visibility, the natural next step is to respond. That may involve creating content, adjusting positioning, improving data sources, or refining distribution. All of these carry a cost in time, budget, or internal resources.

If the prompt itself does not reflect a real decision context, then both the monitoring cost and the response cost are misallocated. You are paying to track something that does not matter, and then potentially investing further to try to improve performance in a space that has no meaningful link to bookings.

The discipline, therefore, is not just analytical. It is financial. A smaller, well-defined set of prompts reduces monitoring cost, but more importantly, it limits where you are likely to invest resources afterwards.
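To make the cost point concrete, the number of tracked responses grows multiplicatively with the size of the prompt set. The sketch below assumes a hypothetical setup in which every prompt is run once a day on every monitored platform; the function name and all figures are illustrative and are not taken from any specific tool's pricing.

```python
def monthly_runs(prompts: int, platforms: int, runs_per_day: int = 1, days: int = 30) -> int:
    """Count the prompt runs generated in one billing period."""
    return prompts * platforms * runs_per_day * days


# Hypothetical comparison: a focused set versus a tool-generated broad set,
# both monitored across four AI platforms.
focused = monthly_runs(prompts=10, platforms=4)  # 10 * 4 * 1 * 30 = 1200
broad = monthly_runs(prompts=60, platforms=4)    # 60 * 4 * 1 * 30 = 7200
```

Under these assumptions the broad set produces six times as many runs to pay for and interpret, before any follow-up content work is counted.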

The Kollective AI Prompt Framework for Hotels

Most hotels have tested at least one AI monitoring tool at some point. The test usually surfaces a list of prompts the tool has chosen, a set of gaps, and a sense that something needs to be done. Without a structured prompt framework going in, that data is difficult to interpret and the tool gets abandoned.

The framework above is designed to change that. It gives you a controlled, meaningful prompt set from day one, so the data you collect has commercial logic behind it and the test tells you something actionable.

When setting up your initial prompt set, use this as your framework:

- One or two branded prompts to understand how AI systems currently describe your hotel and where they are sourcing that information.

- One or two comparative prompts to see how you sit within your competitive set.

- A focused group of service and location prompts, always with a geographic anchor.

- One or two pricing and offer prompts to validate what the market is being told.

- Where relevant, a handful of strategic prompts built around the specific demand conversations your hotel could realistically enter.
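The checklist above can be sketched as a small set of templates that are filled in per hotel. This is a minimal Python illustration, not a prescribed implementation: the templates reuse example prompts from this article, while the names `Prompt`, `TEMPLATES` and `build_prompt_set`, along with the hotel and destination values, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    category: str
    text: str


# Templates adapted from the example prompts in each category.
TEMPLATES = {
    "branded": [
        "Tell me about {hotel}",
        "What are the reviews like for {hotel}?",
    ],
    "comparative": [
        "Compare {hotel} with other boutique hotels in {location}",
    ],
    "service_location": [
        "Best boutique hotels in {location}",
        "Where should I stay in {location} for a honeymoon?",
    ],
    "pricing": [
        "Does {hotel} have any offers at the moment?",
    ],
    "strategic": [
        "We love {popular_destination} but it gets too busy, "
        "where else could we go that is similar but quieter?",
    ],
}


def build_prompt_set(hotel: str, location: str, popular_destination: str) -> list[Prompt]:
    """Fill every template with the hotel's own names and places."""
    values = {
        "hotel": hotel,
        "location": location,
        "popular_destination": popular_destination,
    }
    return [
        Prompt(category, template.format(**values))
        for category, templates in TEMPLATES.items()
        for template in templates
    ]


# Placeholder values, for illustration only.
prompts = build_prompt_set("Hotel Example", "Exampleville", "Example Coast")
```

Keeping the templates in one place makes the size of the prompt set explicit. Adding a prompt becomes a deliberate choice rather than something a tool generates by default.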

If you would like to test this framework in practice, we can set up a complimentary seven-day run in our AI monitoring tools and walk through the results with you.